Scaling Pains: Why Your SSE App Fails Under Load Balancers

The Hidden Cost of Real-Time: Managing Stateful Connections

Server-Sent Events (SSE) are an efficient way to push real-time data from a server to its clients over plain HTTP. However, the moment you run more than one backend instance behind a Load Balancer, you run into the Statefulness Trap.

This article breaks down why an SSE application that tracks its clients in process memory breaks when scaled horizontally across multiple server nodes, and presents language-agnostic architectural patterns that solve the problem while keeping delivery fast.

The Core Conflict: In-Memory State vs. Horizontal Scaling

Understanding SSE and Statefulness

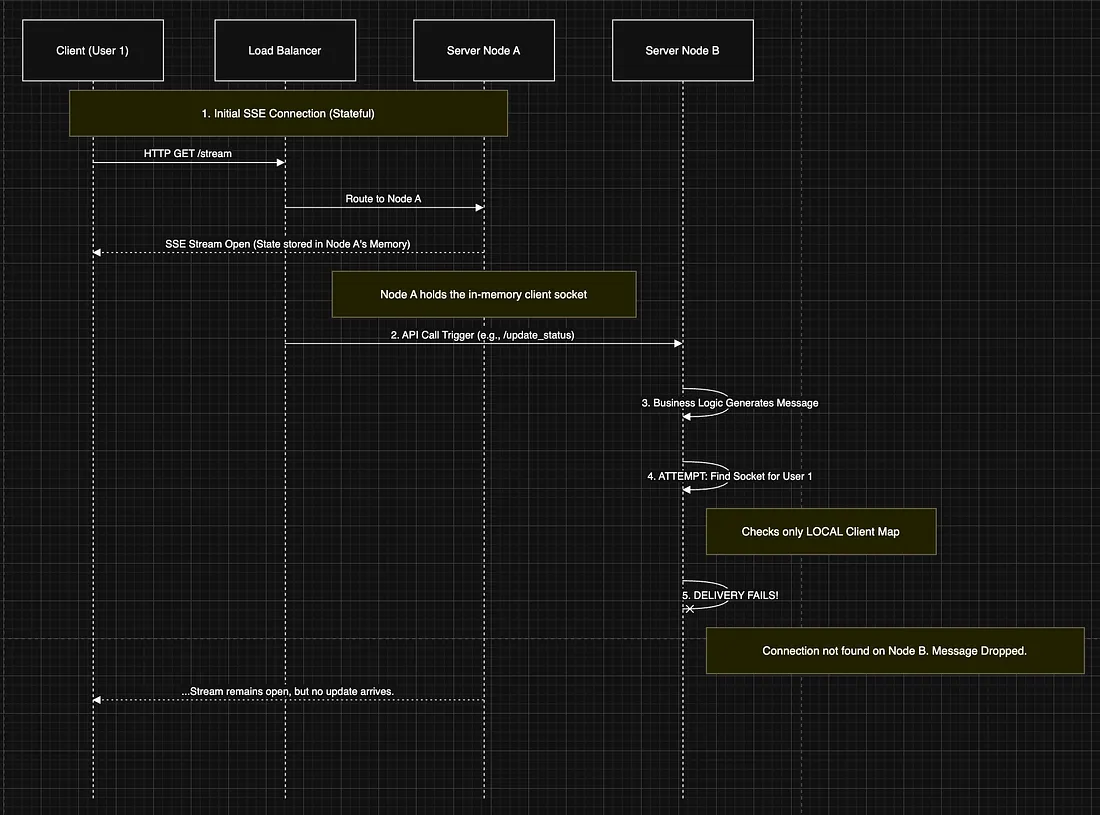

SSE relies on a single, long-lived HTTP connection where the server pushes data to the client. When a user connects to a specific server node (say, Node A):

- The operating system and the backend framework establish and maintain the continuous socket connection.

- The server process (Node A) holds the unique reference (the stream handler or connection object) for this client in its local memory.

- Your application maintains a list or map of active clients, and this state is local to Node A.

This design makes your backend inherently stateful. The state (who is connected and where) is tied to the physical server process.

The Problem: A user is connected to Node A. A downstream service or an API request routed to Node B triggers a data update intended for that user. Node B checks its own local, in-memory client list, finds nothing, and the message fails to send, resulting in a silent failure for the user.
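The failure can be reproduced in a few lines. The following is a minimal sketch, not a real server: the `Node` class, the `user-42` identifier, and the use of a plain list in place of a response stream are all assumptions made for illustration.

```python
class Node:
    """One backend process with its own in-memory registry of SSE clients."""

    def __init__(self, name: str) -> None:
        self.name = name
        # user_id -> payloads written to that user's stream.
        # A real server would hold a response/stream object here instead.
        self.clients: dict[str, list[str]] = {}

    def connect(self, user_id: str) -> None:
        """Register a client whose SSE connection landed on this node."""
        self.clients[user_id] = []

    def send(self, user_id: str, payload: str) -> bool:
        """Push to a locally connected client; report whether it was found."""
        stream = self.clients.get(user_id)
        if stream is None:
            return False  # Unknown on this node: the update is silently lost.
        stream.append(payload)
        return True


node_a = Node("A")
node_b = Node("B")

node_a.connect("user-42")  # The SSE stream lands on Node A.

# The request that triggers the update is routed to Node B.
delivered = node_b.send("user-42", "order shipped")
print(delivered)                  # False
print(node_a.clients["user-42"])  # []
```

Nothing raises an error here, which is what makes the failure silent: Node B simply has no entry for the user.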

Approach 1: The Quick Fix (Sticky Sessions)

The simplest solution is to ensure the Load Balancer always routes a specific client’s traffic to the same server node it originally connected to.

Mechanism: Configure the Load Balancer (e.g., HAProxy, ALB) to use Sticky Sessions (based on IP hash or a session cookie). Once the user establishes an SSE connection with Node A, all future requests, including the long-lived SSE stream, will consistently go to Node A.
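To make the idea of IP-hash affinity concrete, here is a rough sketch of the kind of mapping a Load Balancer performs. It is not the algorithm used by HAProxy or ALB; the `pick_node` function, the node names, and the choice of SHA-256 are assumptions for illustration only.

```python
import hashlib
from typing import Sequence

NODES = ("node-a", "node-b", "node-c")  # hypothetical backend pool


def pick_node(client_ip: str, nodes: Sequence[str] = NODES) -> str:
    """Map a client IP to a node deterministically (IP-hash affinity)."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(nodes)
    return nodes[index]


# The same address always lands on the same node...
first = pick_node("203.0.113.7")
assert all(pick_node("203.0.113.7") == first for _ in range(5))

# ...but a change in pool size can remap a client to a different node.
print(first, pick_node("203.0.113.7", NODES[:2]))
```

Because the mapping depends only on the client address and the pool, many clients behind one shared address (a corporate NAT, for instance) all end up on the same node.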

Advantages:

- Simple Setup: Requires no change to the backend app code.

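- Immediate Fix: Works as soon as it is enabled, provided the update is triggered by a request from the same client. Updates triggered by other users or by background services can still land on a node that does not hold the connection.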

Disadvantages:

- Load Imbalance: Nodes can become disproportionately loaded, leading to inefficient resource use.

- No High Availability: If the sticky node crashes, all connected clients are dropped, leading to service disruption.

Approach 2: The Robust Solution (Centralized Messaging)

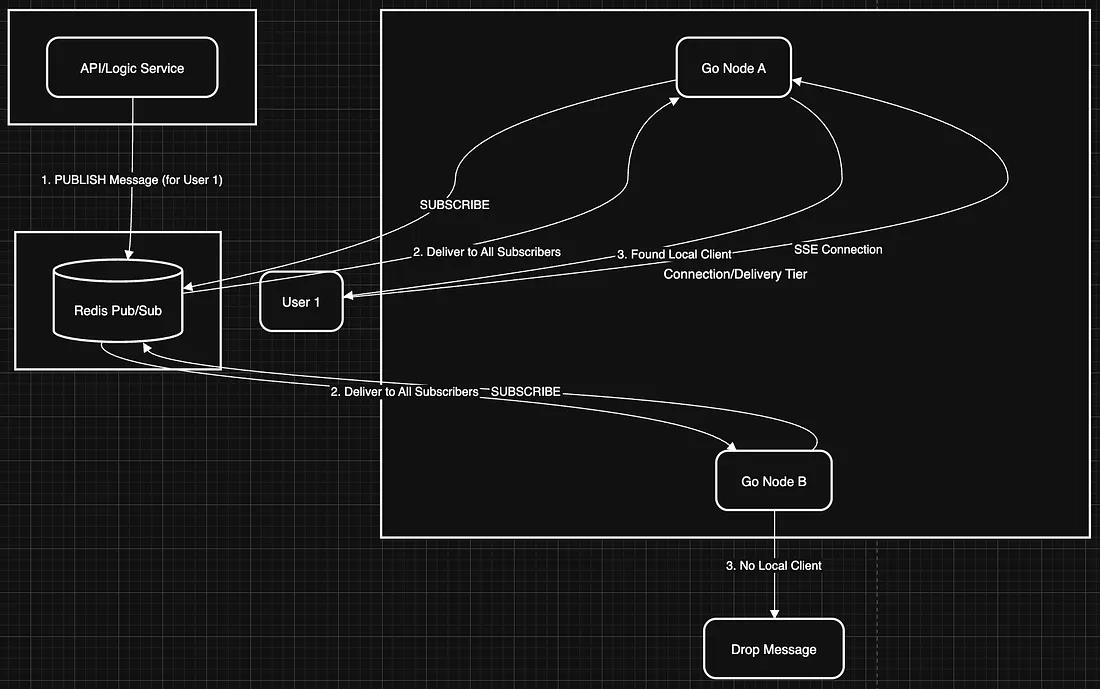

To achieve true horizontal scaling and decoupling, we must externalize message passing to a shared, distributed message bus.

Technology Choice: Redis Pub/Sub, NATS, or Kafka

We introduce a message broker (for example, Redis Pub/Sub, NATS, or Kafka) that all server nodes can access.

Mechanism:

- Subscription: Every server node (A, B, C…) subscribes to relevant channels on the message bus.

- Publishing: The node that generates the data (Node B) publishes the message to the central channel, without caring where the client is.

- Delivery: All subscribed nodes receive the message. Only Node A, which holds the active, in-memory connection reference for that client, forwards the data through the SSE stream. Other nodes silently discard the message.
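The three steps above can be sketched as follows. To keep the example runnable on its own, the broker is replaced by a small in-process `InMemoryBus`; that class, the `user-events` channel name, and the `Node` methods are all hypothetical stand-ins, and a real deployment would use the client library of the chosen broker instead.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBus:
    """Stand-in for a real broker (Redis Pub/Sub, NATS, ...) so the sketch runs alone."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, channel: str, handler: Handler) -> None:
        self._subscribers[channel].append(handler)

    def publish(self, channel: str, message: dict) -> None:
        # Fan out to every subscriber, as a pub/sub broker would.
        for handler in list(self._subscribers[channel]):
            handler(message)


class Node:
    def __init__(self, name: str, bus: InMemoryBus) -> None:
        self.name = name
        self.bus = bus
        self.clients: dict[str, list[str]] = {}  # user_id -> delivered payloads
        bus.subscribe("user-events", self.on_message)  # Subscription

    def connect(self, user_id: str) -> None:
        self.clients[user_id] = []

    def notify(self, user_id: str, payload: str) -> None:
        # Publishing: no knowledge of which node holds the connection.
        self.bus.publish("user-events", {"user_id": user_id, "payload": payload})

    def on_message(self, message: dict) -> None:
        # Delivery: forward only if the client is connected here, else discard.
        stream = self.clients.get(message["user_id"])
        if stream is not None:
            stream.append(message["payload"])


bus = InMemoryBus()
node_a, node_b, node_c = (Node(n, bus) for n in "ABC")
node_a.connect("user-42")

node_b.notify("user-42", "order shipped")
print(node_a.clients)  # {'user-42': ['order shipped']}
print(node_b.clients)  # {}
```

Every node pays the cost of receiving every message on the channel. Whether that matters depends on message volume; per-user or per-topic channels are one way to reduce it.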

Approach 3: Advanced Decoupling (Dedicated Broadcast Layer)

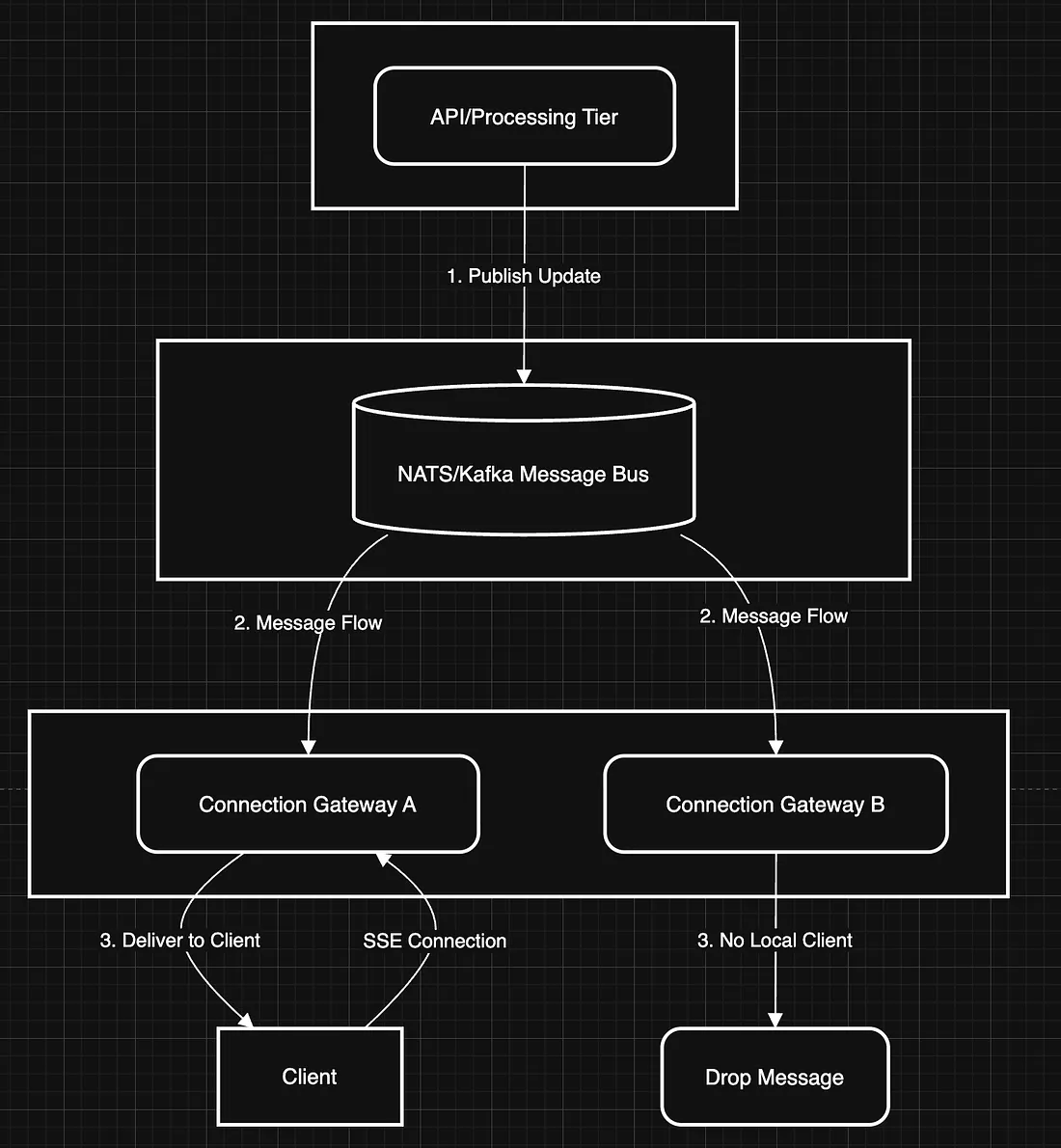

For large-scale, high-performance microservice environments, we separate responsibilities into two dedicated tiers, building upon the Pub/Sub model.

The Two Tiers:

- API/Processing Tier (Publisher): Handles all business logic and computationally intensive tasks. It only publishes messages to the centralized bus.

- Connection/Gateway Tier (Subscriber): This tier is specialized to handle only the incoming SSE connections. It consumes messages from the bus and performs the final delivery to the client’s socket.

Key Benefit: You can scale the CPU-intensive API tier independently of the memory/connection-intensive Gateway tier, optimizing resource usage and isolating failure domains.
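A compact sketch of the split might look like this. The `ApiWorker` and `Gateway` classes, the `place_order` method, and the module-level `publish` function (standing in for the message bus) are assumptions chosen for illustration, not a prescribed design.

```python
from typing import Callable

Handler = Callable[[str, str], None]
subscribers: list[Handler] = []  # stand-in for the shared message bus


def publish(user_id: str, payload: str) -> None:
    for handler in list(subscribers):
        handler(user_id, payload)


class ApiWorker:
    """API/processing tier: business logic only, holds no client connections."""

    def place_order(self, user_id: str, item: str) -> None:
        # ... validation, persistence, and other heavy work would go here ...
        publish(user_id, f"order placed: {item}")


class Gateway:
    """Connection tier: holds SSE streams, contains no business logic."""

    def __init__(self) -> None:
        self.streams: dict[str, list[str]] = {}
        subscribers.append(self.deliver)

    def connect(self, user_id: str) -> None:
        self.streams[user_id] = []

    def deliver(self, user_id: str, payload: str) -> None:
        if user_id in self.streams:
            self.streams[user_id].append(payload)


# Each tier is sized on its own: here, three API workers and two gateways.
workers = [ApiWorker() for _ in range(3)]
gateways = [Gateway() for _ in range(2)]
gateways[1].connect("user-42")

workers[0].place_order("user-42", "keyboard")
print(gateways[1].streams["user-42"])  # ['order placed: keyboard']
```

Note that `ApiWorker` has no client registry at all, so adding or removing API instances never affects an open stream.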

Handling Node Failure (The Graceful Disconnect)

When a server node must shut down (e.g., during a deployment or when autoscaling down), the goal is a graceful shutdown, so that clients notice right away and reconnect immediately.

Server Responsibility: Upon receiving a termination signal (like SIGTERM), the server process must actively close all open SSE connections. Closing the connection is critical because it avoids long timeouts and immediately signals the client.
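As a rough sketch of the server side, assuming a hypothetical `SseConnection` wrapper and a module-level `connections` registry, the shutdown path could look like this. Most web frameworks expose their own shutdown hook, which is usually a better place for this logic than a raw signal handler.

```python
import signal
import sys


class SseConnection:
    """Hypothetical wrapper around one open SSE response stream."""

    def __init__(self, user_id: str) -> None:
        self.user_id = user_id
        self.open = True

    def close(self) -> None:
        # A real server would end the HTTP response / close the socket here.
        self.open = False


connections: dict[str, SseConnection] = {}


def close_all_connections() -> int:
    """Close every open stream and return how many were closed."""
    closed = 0
    for conn in list(connections.values()):
        if conn.open:
            conn.close()
            closed += 1
    connections.clear()
    return closed


def handle_sigterm(signum: int, frame: object) -> None:
    close_all_connections()
    sys.exit(0)


def install_signal_handler() -> None:
    # Must be called from the main thread of the process.
    signal.signal(signal.SIGTERM, handle_sigterm)


connections["user-42"] = SseConnection("user-42")
connections["user-43"] = SseConnection("user-43")
print(close_all_connections())  # 2
```

In a real service, `install_signal_handler()` would be called once at startup; it is left uncalled here so the sketch has no side effects on the running process.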

Client Responsibility: The standard browser EventSource object is designed for resilience. When the connection is explicitly closed or drops, the client automatically:

- Triggers an error event.

- Waits for the reconnection time (a browser default of a few seconds, which the server can change by sending a retry: field in the stream).

- Attempts to reconnect by issuing a new HTTP request.

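The Load Balancer routes this new request to a different, healthy node, and the user’s stream resumes, often within seconds. Events published during the gap are not replayed automatically: if the server tags events with an id, the browser sends the last id it saw in the Last-Event-ID request header, and the server can use it to resend what was missed.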
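The reconnect behaviour is steered by two fields of the text/event-stream format: `retry:` sets the reconnection delay in milliseconds, and `id:` sets the value the browser reports back in the `Last-Event-ID` header when it reconnects. The sketch below shows one way to serialize events and replay missed ones. The helper names and the in-memory `history` list are assumptions; a multi-node deployment would need that history in shared storage.

```python
from typing import Optional


def format_sse(data: str, event_id: Optional[str] = None,
               retry_ms: Optional[int] = None) -> str:
    """Serialize one event in the text/event-stream wire format."""
    lines = []
    if retry_ms is not None:
        lines.append(f"retry: {retry_ms}")  # reconnection delay hint, in ms
    if event_id is not None:
        lines.append(f"id: {event_id}")     # reported back as Last-Event-ID
    for part in data.split("\n"):
        lines.append(f"data: {part}")       # one data line per payload line
    return "\n".join(lines) + "\n\n"        # a blank line ends the event


def events_after(history: list[tuple[int, str]],
                 last_event_id: Optional[str]) -> list[str]:
    """Replay events newer than the id the client reports on reconnect."""
    last = int(last_event_id) if last_event_id else 0
    return [format_sse(body, str(eid)) for eid, body in history if eid > last]


history = [(1, "a"), (2, "b"), (3, "c")]  # hypothetical per-user event log
print(repr(format_sse("hello", event_id="7", retry_ms=2000)))
# 'retry: 2000\nid: 7\ndata: hello\n\n'
print(events_after(history, "2"))
# ['id: 3\ndata: c\n\n']
```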

Conclusion: Choose Your Compromise

While Sticky Sessions are an accessible first step, they should be avoided in production environments where high availability and even load distribution are essential.

For any serious, scalable SSE application, adopting a Centralized Messaging architecture (Approach 2 or 3) is mandatory. It decouples the state of the connection from the logic of the message, allowing your backend services to truly scale horizontally, regardless of the language they are written in.